RUNS

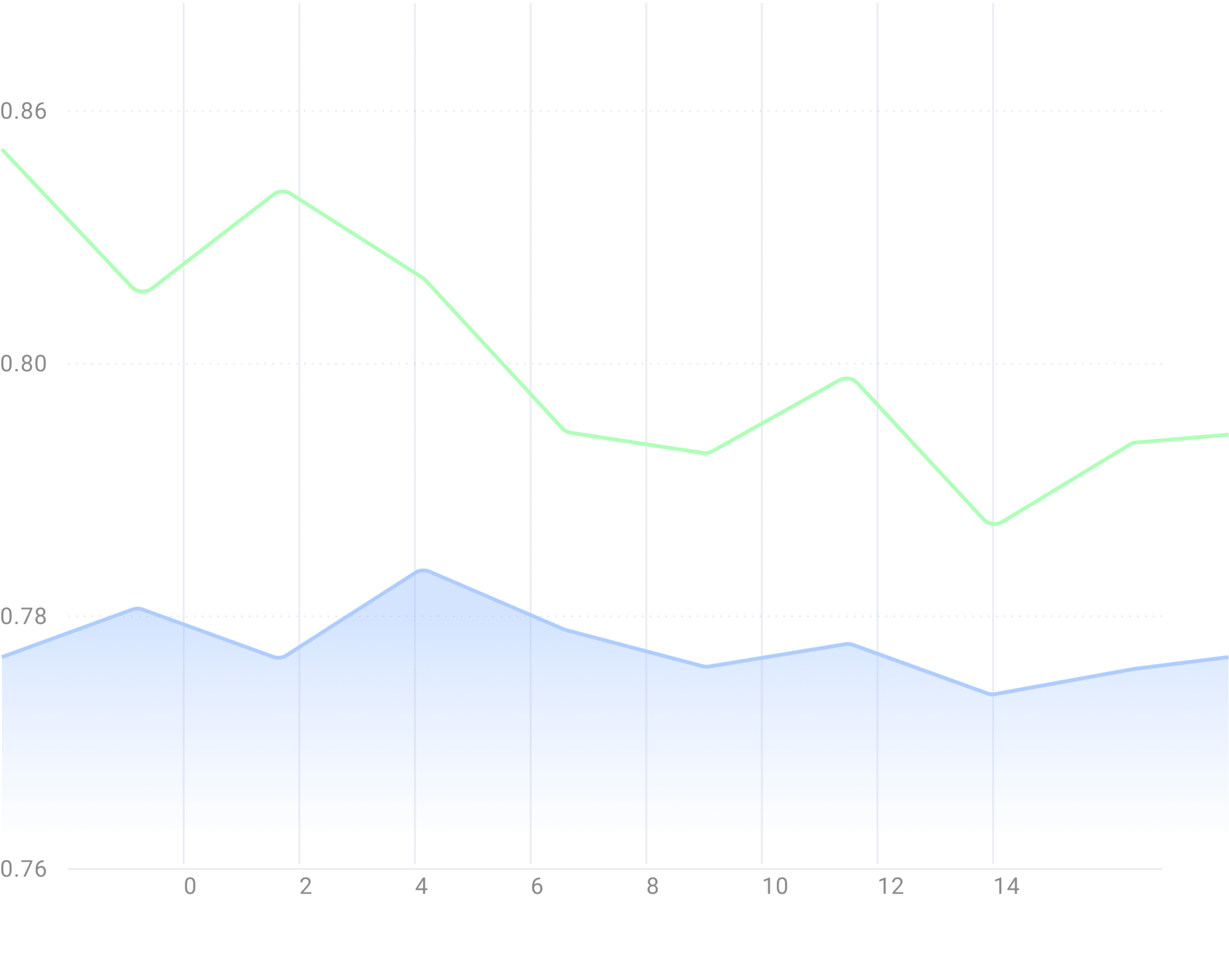

Scale your machine learning code to hundreds of GPUs and model configurations without changing a single line of it. Grid Runs support all major ML frameworks and provide full hyperparameter sweeps, multi-node scaling, native logging, asset management, and interruptible pricing out of the box.

Launch a sweep now

DATASTORES

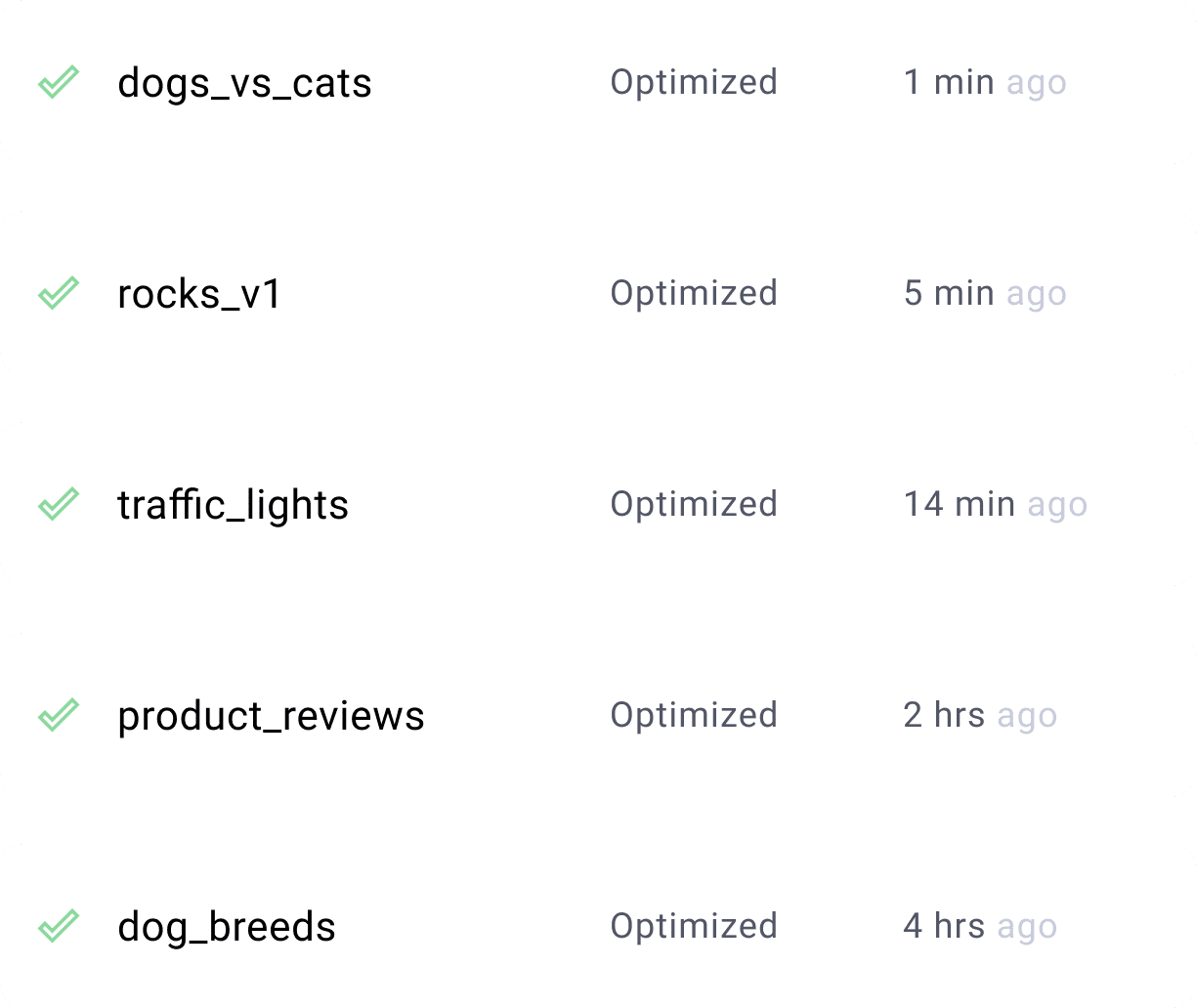

Grid Datastores enable your training code to access vast volumes of data from the cloud as if it were located on your laptop’s local filesystem. Datastores are optimized for machine learning workloads, so you can train at peak speed without navigating the complexities of optimizing cloud storage, whether you work from the command line or the Web UI.

Create a Datastore

SESSIONS

Preloaded with JupyterHub, integrated with GitHub, and accessible from SSH or your IDE of choice, Sessions let you develop remotely exactly as you would on your laptop. Sessions provide a preconfigured environment so you can prototype faster on the same hardware you will use to scale your model with Runs. Pay only for the compute you need (pause and resume) while you get a baseline working, then scale your work with Runs.

Launch a Session

Benefits of the Grid Platform

Open Framework

Grid supports multiple frameworks, such as PyTorch Lightning, PyTorch, TensorFlow, and Keras, as well as the open-source tools you love, like Julia, VS Code, Horovod, Python, Optuna, and Jupyter.

Keep track of your models on the go

With mobile web support, the Grid platform makes it easy to track experiments and manage compute on the go. Grid is available as a managed service running in the Grid.ai cloud or in your own VPC.

Serverless Platform

Grid enables your training code to access vast volumes of data from the cloud as if it were located on your laptop’s local filesystem.

Collaborate with your Team

Administer your team, allocate budgets, and share training models on the go!

Burst and pay for what you need

On-demand compute: pay only for what you need and use capacity on demand.

Eliminate the MLOps Burden

Enable data scientists, students, and researchers to develop fast and deploy faster.